I’m often surprised at how casual so many communities are about who they let in. To add people to your membership is to steer your community in a new direction, and you should know what direction that is. There’s nothing more powerful than a group of aligned people, and nothing more difficult than steering a group when everyone wants something different for it. I’ve seen bad decisions on who to include ruin many communities. And, on the other hand, being intentional about it can have a transformative effect, leading to inspiring alignment and collaboration. The best collaborations of my life have all been in discerning communities.

So what does it mean to be intentional about membershipping? You could say that there are two overall strategies. One is to go slow and really get to know every prospective member before inviting them fully into the fold. The other is to be very explicit and provide narrow, objective criteria for membership. Both have upsides and downsides. If you spend a lot of time getting to know someone, there will be no surprises. But this can produce cliqueishness and cronyism: who else have you spent that much time with other than your own friends? On the other hand, communities that base membership on explicit objective criteria can be exploited. A community I knew wanted tidy and thoughtful people, so it would filter people on whether they helped with the dishes and brought dessert. The thinking was that a person who does those things naturally is certainly tidy and thoughtful. But every visitor knew to bring dessert and help with the dishes, regardless of what kind of person they were, so the test failed as an indicator.

We need better membershipping processes. Something with the fairness and objectivity of explicit criteria, but without their vulnerability to being faked. There are lots of ways that scholars solve this kind of problem. They will theorize special mechanisms and processes. But wouldn’t it be nice if we could select people who just naturally bring dessert, help with dishes, ask about others, and so on? Is that really so hard? To solve it, we’re going to do something different.

The mechanism: the double-blind policy process with collective amnesia

Amnesia is usually understood as memory loss. But that’s actually just one kind, called retrograde amnesia, the inability to access memories from before an event. The opposite kind of amnesia is anterograde. It’s an inability to form new memories after some event. It’s not that you lost them, you never got them in the first place. We’re going to imagine a drug that induces temporary anterograde amnesia. It prevents a person from forming memories for a few hours.

To solve the problem of bad membershipping, we’re going to artificially induce it in everyone. Here’s the process:

- A community’s trusted core group members sit and voluntarily induce anterograde amnesia in themselves (with at least two observers monitoring for safety).

- In a state of temporary collective amnesia, the group writes up a list of membership criteria that are precise, objective, measurable, and fair. As much as possible, items should be the result of deliberation rather than straight from the mind of any one person.

- They then seal the secret criteria in an envelope and forget everything.

- Later, the core group invites a prospective new member to interview.

- The interview isn’t particularly well structured because no one knows what it’s looking for. So instead it’s a casual affair involving a range of activities that really have nothing to do with the community’s values. These activities are diverse and wide-ranging enough to reveal a variety of dimensions of the prospective’s personality. An open-ended personality test or two could work as well. What you need is a broad activity pool that elicits a range of illuminating choices and behaviors. These are being observed by the membership committee members, but not discussed or acted upon until ….

- After the interview, a group of members sits to deliberate on the prospective’s membership, by

    - collectively inducing anterograde amnesia,

    - opening the envelope,

    - recalling the prospective’s words and choices and behavior over the whole activity pool,

    - judging all that against the temporarily revealed criteria,

    - resealing the criteria in the envelope,

    - writing down their decision, and then

    - forgetting everything.

- Later this membership committee reads the decision they came to to find out if they will be welcoming a new peer to the group.
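For readers who think better in code, the steps above can be sketched as a toy program. This is only an illustration under loud assumptions: the class names (`AmnesiaSession`, `SealedEnvelope`), the observation keys, and the two example criteria are all made up for this sketch and are not part of any real protocol. The one property it tries to capture is that the criteria are readable only inside an amnesia session, and the only thing that survives a session is the written decision.

```python
from typing import Callable, Dict, List

# Hypothetical data shapes, invented for this sketch.
Observation = Dict[str, int]                 # e.g. {"asked_about_others": 3}
Criterion = Callable[[Observation], bool]    # one objective, measurable test


class AmnesiaSession:
    """Models temporary anterograde amnesia: nothing learned inside persists."""

    def __init__(self) -> None:
        self.active = False
        self.working_memory: list = []

    def __enter__(self) -> "AmnesiaSession":
        self.active = True
        return self

    def __exit__(self, *exc) -> bool:
        self.working_memory.clear()   # "forget everything"
        self.active = False
        return False                  # do not swallow exceptions


class SealedEnvelope:
    """Holds criteria that may only be read while a session is active."""

    def __init__(self, criteria: List[Criterion]) -> None:
        self._criteria = criteria

    def open(self, session: AmnesiaSession) -> List[Criterion]:
        if not session.active:
            raise PermissionError("envelope may only be opened under amnesia")
        return self._criteria


def draft_criteria() -> SealedEnvelope:
    """Core group writes criteria under amnesia, then seals them."""
    with AmnesiaSession() as session:
        # Placeholder criteria; a real group would deliberate these.
        criteria: List[Criterion] = [
            lambda obs: obs.get("asked_about_others", 0) >= 2,
            lambda obs: obs.get("interruptions", 0) <= 1,
        ]
        session.working_memory.extend(criteria)
        return SealedEnvelope(criteria)


def deliberate(envelope: SealedEnvelope, observed: Observation) -> bool:
    """Committee judges observed behavior against the revealed criteria."""
    with AmnesiaSession() as session:
        criteria = envelope.open(session)
        session.working_memory.extend(criteria)
        decision = all(test(observed) for test in criteria)
    # Only the written decision leaves the session.
    return decision
```

A committee would call `draft_criteria()` once, run the unstructured interview, and later call `deliberate(envelope, observed)` to get back nothing but a yes or no. Trying to call `envelope.open(...)` with a session that is not active raises `PermissionError`, which is the code's stand-in for "nobody sober knows the rules."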

The effect is that the candidate got admitted in a fair, systematic way that can’t be abused. Why does it work? No one knows how to abuse it. In short, you can’t game a system if literally nobody knows what its rules are. Not knowing the rules that govern your society is normally a problem, but it seems to be just fine for membership rules, maybe because they are defined around discrete intermittent events.

Psychoactives in decision-making

If this sounds fanciful, it’s not: the sedatives propofol and midazolam both have this effect. They are common enough in the cocktails of sedatives, anesthetics, analgesics, and tranquilizers that anaesthesiologists administer during surgical procedures.

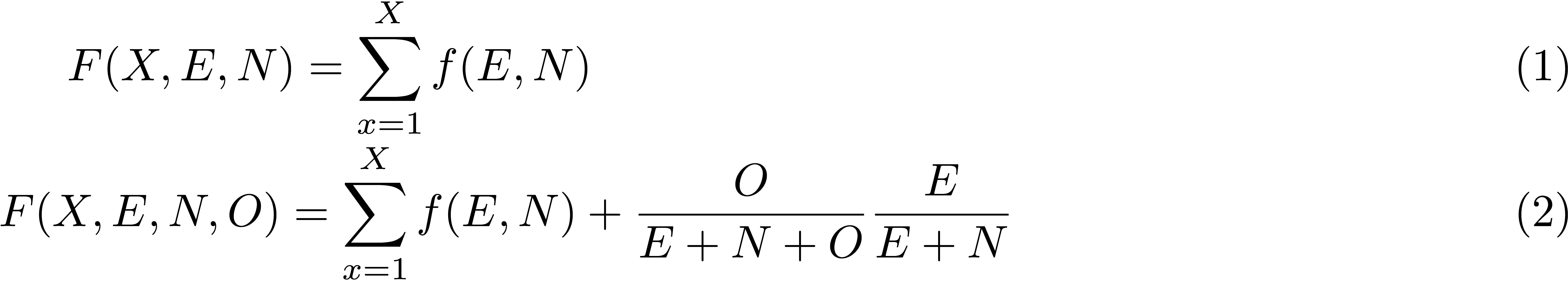

If this sounds feckless or reckless, it’s not. There is an actual heritage of research that uses psychoactives to understand decision-making. I’m a cognitive scientist who studies governance. I learned about midazolam from Prof Richard Shiffrin, a leading mathematical psychologist and expert in memory and decision-making. He invoked it while proposing a new kind of solution to a social dilemma game from economic game theory. In the social dilemma, two people can cooperate but each is tempted to defect. Shiffrin suggests that you’ll cooperate if the person is so similar to you that you know they’ll do whatever you do. He makes the point by introducing midazolam to make it so the other person is you. In Rich’s words:

You are engaged in the simple centipede game decision tree [Ed. if you know the Prisoner’s Dilemma, just imagine that] without communication. However the other agent is not some other rational agent, but is yourself. How? You make the decision under the drug midazolam which leaves your reasoning intact but prevents your memory for what you thought about or decided. Thus you decide what to do knowing the other is you making the other agent’s decision (you are not told and don’t know and care whether the other decision was made earlier or after because you don’t remember). Let us say that you are now playing the role of agent A, making the first choice. Your goal is to maximize your return as agent A, not yourself as agent B. When playing the role of agent B you are similarly trying to maximize your return.

The point is correlation of reasoning: Your decision both times is correlated, because you are you and presumably think similarly both times. If you believe it is right to defect, would you nonetheless give yourself the choice, knowing you would defect? Or knowing you would defect would you not choose (0,0)? On the other hand if you think it is correct to cooperate, would it not make sense to offer yourself the choice? When playing the role of B let us say you are given the choice – you gave yourself the choice believing you would cooperate – would you do so?

— a 2021/09/15 email

The upshot is that if you know nothing except that you are playing against yourself, you are more likely to cooperate because you know your opponent will do whatever you do, because they’re you. As he proposed it, it was a novel and creative solution to the problem of cooperation among self-interested people. And it’s useful outside of the narrow scenario it isolates. The idea of group identity is precisely that the boundaries of our conceptions of ourselves can expand to include others, so what looks like a funny idea about drugs is used by Shiffrin to offer a formal mechanism by which group identity improves cooperation.
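The arithmetic behind that upshot can be made concrete with a small sketch. It is my own illustration, not Shiffrin's formalization: it uses a one-shot Prisoner's Dilemma rather than the centipede game, the payoff numbers (5, 3, 1, 0) are the conventional textbook values and are assumed here, and `q` is a hypothetical parameter for the probability that the other player ends up making the same choice you do. Playing against yourself under midazolam corresponds to `q = 1`.

```python
# Assumed textbook Prisoner's Dilemma payoffs to "me", keyed by (my move, their move).
# T=5 (temptation), R=3 (reward), P=1 (punishment), S=0 (sucker).
PAYOFF = {
    ("C", "C"): 3.0,
    ("C", "D"): 0.0,
    ("D", "C"): 5.0,
    ("D", "D"): 1.0,
}


def expected_payoff(my_move: str, q: float) -> float:
    """Expected payoff when the other player mirrors my move with probability q."""
    other = "D" if my_move == "C" else "C"
    return q * PAYOFF[(my_move, my_move)] + (1.0 - q) * PAYOFF[(my_move, other)]


def best_action(q: float) -> str:
    """Return the move with the higher expected payoff (ties go to defecting)."""
    cooperate = expected_payoff("C", q)
    defect = expected_payoff("D", q)
    return "C" if cooperate > defect else "D"


if __name__ == "__main__":
    for q in (0.5, 0.8, 1.0):
        print(q, expected_payoff("C", q), expected_payoff("D", q), best_action(q))
```

With these assumed payoffs, cooperating wins whenever `3q > 5 - 4q`, that is, whenever `q > 5/7`. At `q = 1` only the outcomes where both players do the same thing are reachable, so the choice collapses to 3 versus 1. At `q = 0.5`, where the other player's move tells you nothing, defecting is better, which is the usual dilemma.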

Research at the intersection of drugs and decision-making isn’t restricted to thought experiments. For over a decade, behavioral economists in the neuroeconomics tradition have been piecing together the neurophysiology of decision-making by injecting subjects with a variety of endogenous and exogenous substances. For example, see this review of the effects of oxytocin, testosterone, arginine vasopressin, dopamine, serotonin, and stress hormones.

Compared to this other work, all that’s unusual about this post is the idea of administering to a whole group instead of individuals.

Why save democracy when you can save dictatorship? | The connection to incentive alignment

This mechanism is serious for another reason too. The problem of membershipping is a special case of a much more general problem: “incentive alignment” (also known as “incentive compatibility”).

- When people answering a survey tell you what they want you to hear instead of the truth

- When someone lies at an interview

- Just about any time that people aren’t incentivized to be transparent

Those are all examples of mis-alignment in the sense that individual incentives don’t point to system goals.

Incentive compatibility is especially challenging for survey design. That’s important because surveys are the least bad way to learn things about people in a standardized way. Incentive compatible survey design is a real can of worms.

That’s what’s special about double-blind policy. It’s a step in the direction of incentive compatibility for self-evaluation. You can’t lie about a question if nobody knows what was asked.

Quibbles

For all kinds of reasons this is not a full solution to the problem. One obvious problem: even if no one knows the rules, anyone can guess. The whole point of introducing midazolam into the social dilemma game was that you know that you will come to the same conclusions as yourself in the future. So just because you don’t know the criteria doesn’t mean you don’t “know” the criteria. You just guess what you would have suggested, and that’s probably it. To solve this, the double-blind policy mechanism has to be collaborative. It requires that several people participate, and that a collaborative deliberation process over many members will produce integrated or synergistic criteria that no single member would have thought of.

Other roles for psychoactives in governance design

The uses of psychoactives in community governance are, as far as I know, entirely unconsidered. Some cultures have developed ritualistic sharing of tobacco or alcohol to formalize an agreement. Others have ordered the disloyal to drink hemlock juice, a deadly poison whose alkaloid coniine acts on nicotinic acetylcholine receptors. That’s all I can think of. I’m simultaneously intrigued to imagine what else is out there and baseline suspicious of anyone who tries.

The ethics

For me this is all one big thought experiment. But I live in the Bay Area, which is governed by strange laws like “The Pinocchio Law of The Bay” which states:

“All thought experiments want to go to the San Francisco Bay Area to become real.”

(I just made this up but it scans)

Hypothetically, I’m very pleased with the idea of solving governance problems with psychoactives, but I’ll admit that it suffers from being awful-adjacent: It’s very very close to being awful. I see three things that could tip it over:

1) If you’re not careful it can sound pretty bad, especially to any audience that wants to hate it.

2) If you don’t know that the idea has a legitimate intellectual grounding in behavioral science, then it just sounds druggy and nuts.

3) If it’s presented without any mention of the potential for abuse then it’s naive and dangerous.

So let’s talk about the potential for abuse. The double-blind policy process with collective amnesia has serious potential for abuse. Non-consensual administration of memory drugs is inherently horrific. Consensual administration of memory drugs automatically spawns possibilities for non-consensual use. Even if it didn’t, consensual use itself is fraught, because what does that even mean? The framework of consent requires being able and informed. How able and informed are you when you can’t form new memories?

So any adoption or experimentation around this kind of mechanism should provide for secure storage and should come with a security protocol for every stage. Recording video or having observers who can see (but not hear?!) all deliberations could help. I haven’t thought more deeply than this, but the overall ethical strategy would go like this: You keep abuse potential from being the headline of this story by credibly internalizing the threat at all times, and by never being satisfied that you’ve internalized it enough. Expect something to go wrong and have a mechanism in place for nurturing it to the surface. Honestly there are very few communities that I’d trust to do this well. If you’re unsure you can do it well, you probably shouldn’t try. And if you’re certain you can do it well, then definitely don’t try.