When I was in grad school, first engaging with more established scientists, I was excited to share my ideas and transmit my enthusiasm to others. “Yes, but why is that important?” I would hear. So I’d try again, explaining my idea. “But why does it matter?” So I’d try again, starting to get frustrated. I wasn’t getting the idea across. If I was asked how my idea was new, innovative, and important, I’d hear three different ways of asking the same question. What I was missing is that they’re three distinct concepts.

It took forever, and I did it very much alone, but I eventually learned how to answer these questions. As a result, I win grants and get friends, colleagues, and strangers excited about my work. My qualifications are a 5/5 record with the US National Science Foundation, which has a 5% acceptance rate, and several million dollars in federal funding for risky interdisciplinary research projects. But I’ve lost something too. I write, edit, and review a lot of grants, and I have to train the people in my lab to write them well. Yet when a student or someone else in my lab shares an idea, my first answer is “Yes, but why is that important?” And when they try again, I ask, “But why is it important?” It makes me wonder whether I’ve learned anything at all: do I really understand how to convince others if I can’t explain it well enough to make them more convincing?

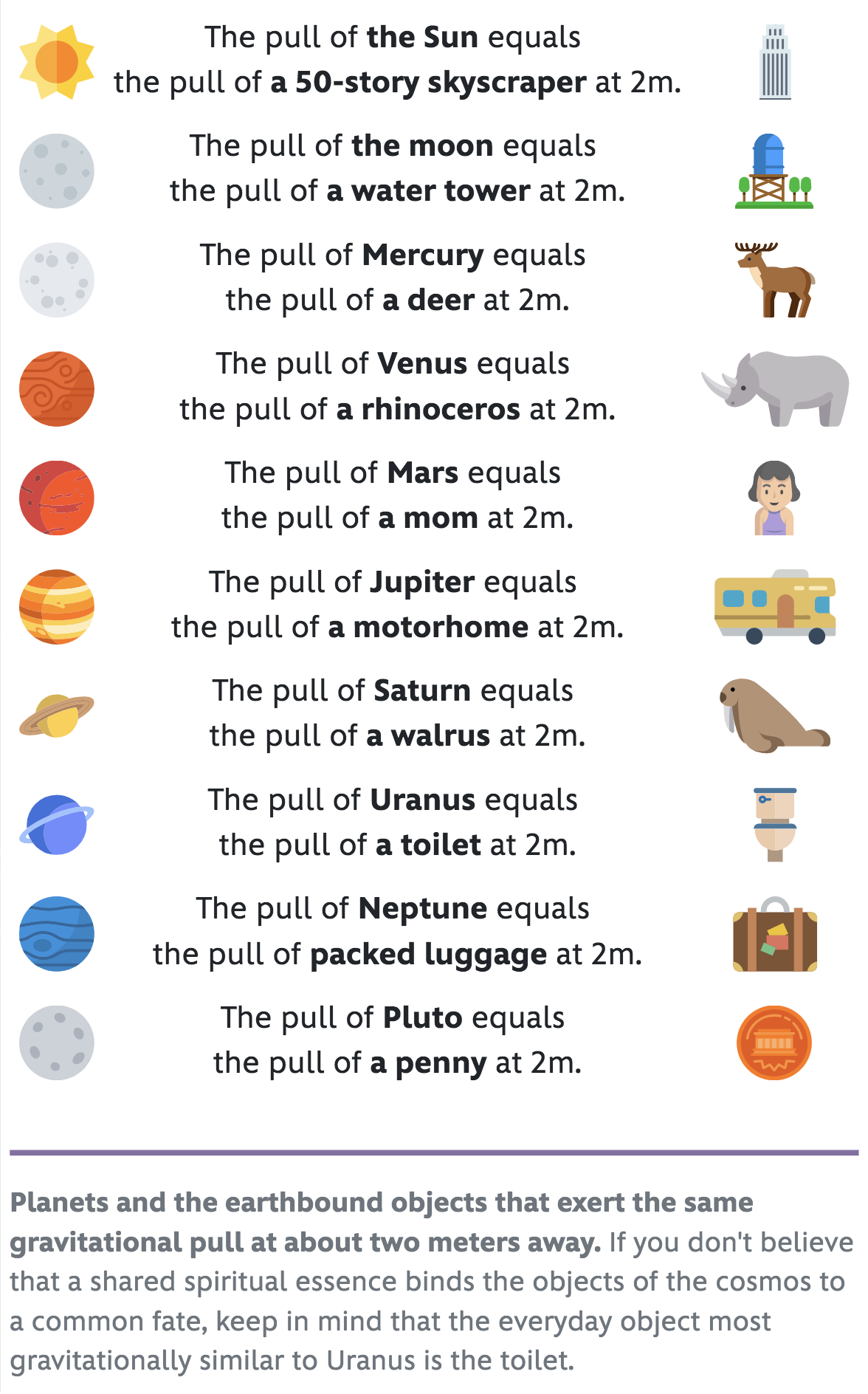

So I finally sat down and tried to make sense of it all. There are a lot of overlapping words: novel, original, important/transformative, impactful, a contribution, feasible, well-designed. What’s the difference between your rationale and your objective, your approach and your rationale, or your aim and your research question? They each mean something, and in very competitive grants you have to demonstrate each of them one by one, along with how they come together. But how are they different? How do they relate, and what’s the best order for them?

It’s important to answer this because when we write, we tend to moosh them all into one field. But they have to be distinct, and you have to know which is which, and when. So it’s not enough to check all the boxes; you have to put each thing in the right box and no other.

A landscape metaphor has suddenly been helping me communicate the difference between all these seemingly saccharine and indistinguishable words. I imagine a single research project as part of a journey out from what everyone thinks to somewhere new. This makes it easier to keep each part distinct.

This all might sound trite and obvious. But people often think that describing the destination implies the landscape, route, view, and distance. It doesn’t. Each is important to get into. Also, this breakdown unpacks single laden words (“gap”) into questions to answer (“what’s new?” “hasn’t this been done?” “why?” “what is everyone missing that you saw?”). If you see “novel” in an application, you can look here, see what questions are being implicitly asked, and then answer them like a mind reader.

Here is a breakdown, in the rough order that the parts tend to come up in grant applications.

- The landscape you’re in and its major features: geography, population centers, demographics, and natural history. Why is this general region important to understand? What industries does it support?

- (The landscape in the metaphor is “the subject area” of the grant and its stakes. Beyond setting up the case for the social or theoretical impact of the work, don’t actually spend too much time on this: just enough to establish your expertise and make everything that follows make sense.)

- Landscape is distinct from your destination, a description of the location you want to end up in.

- (“the project and objectives”: what are you trying to accomplish?) It will turn out that the destination isn’t special in itself, only for the other things about it: its distance, its view, the path to it, and the fact that you have inside information on how to get there. So don’t cram everything into the destination; those are distinct things to establish in their own space. Share just enough to make what follows make sense.

- Destination is distinct from the distance to the destination. It’s not literally about distance, because this is just a metaphor. More fundamentally, it’s about establishing that the destination is a remote “there” and not a currently populated “here”: that the distance is in fact non-zero. What about the destination makes it a place that isn’t already known and populated? Can you show that it’s not currently charted and that no one is currently there? Can you say why?

- (“the gap”: what has kept everyone from already being where you’re headed? Is it far away from others, close but obscured, or hard to perceive?)

- The view from the destination

- (“the impact”: what’s special about the new place you’re trying to go? What new things can you see from that vantage point (what questions can now be answered or, better, asked), either looking forward to uncharted territory or backward for a fresh perspective on what is known? Remember that no one’s been there before and only you have glimpsed it, so make them want it. “Intellectual merit” and “transformative potential” are about this.)

- Aside from the additional vistas it reveals, are there any resources at the destination that can be shipped back to where everyone is (real-world applications, a concrete problem solved)? This doesn’t have to be extractive, since knowledge is not subtractable. These are what make the work “important”. This part can also be shifted up before the gap or down after the approach.

- Your route. The path you’re taking there

- “The approach”: are you taking a smart route to your destination? What makes it smart? Is it a shortcut? Does something about your approach make you travel faster than others? Is it strategic in another way? What has bogged down others who have tried? Often when the word “novelty” appears, it’s specifically about approach. “Feasibility” is also about this. Sometimes the best way to show there’s a path is to reveal your current location: that you’re already following the path (“preliminary work”).

- The approach section is also where checkpoints, evaluation, measurement, and alternate routes or contingencies get described, just enough to build confidence that you’ve thought about them.

- Your preparations for the journey

- (“the team”: what about you and your group makes the journey doable, and especially doable by you? The best is to give the reader the sense that you’re the best people to do it and that no one else could, either because of your training or because the originality of your exposition on the other parts shows that you’re the only ones really ready for the trip.)

- Your compass. This doesn’t end up in the application, but you should also make sure that you want to make the trip, or at least be clear about why you’re doing it. Sometimes people get so caught up in applying for money for the sake of having more money that they miss how a small tweak could lead to a journey that feels more like an adventure, and more in the direction they want to go.

Aside from being clear about each of these things, as if they weren’t implied by the others, it’s even better if you have insightful things to say about each: “Most people take this approach, but it’s actually a dead end.” “People don’t realize how much is over that peak.” “Everyone thinks this area is well explored, but we found a hidden valley.” If you’re not original in most of your proposal, just in the method or the question, then highlight that and hope it’s enough to impress a committee. The more of these things you can bring something original to, the better your chances of making people excited. It’s not just about money: it’s important to be good at getting critical people excited about things. For me, this is how to do it.

This is just a draft and still needs polish, but I hope it’s useful. It’s informed by frameworks like the Heilmeier Catechism, Ikigai, and @mako’s Project Planning Doc; by the standard mix of fields on most grant applications, which can be hard to tell apart; and by my own experience stumbling through.

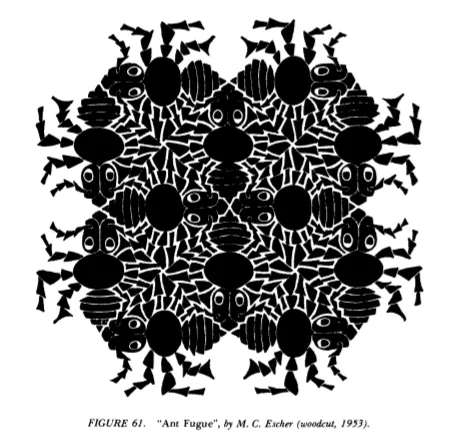

What’s the thing in your life that you’ve looked at more than anything else? Your walls? Your mom? Your hands? Not counting the backs of your eyelids, the right answer is your nose and brow. They’ve always been there, right in front of you, taking up a steady twentieth or so of your vision every waking moment.